Following the devastating earthquake that struck Jajarkot district in Karnali Province in early November, social media users shared AI-generated images claiming to show the devastation from the disaster.

One such photo showed dozens of houses in ruins as people and rescuers walked through the debris.

The photo was initially shared by Meme Nepal. It was soon used by celebrities, politicians and humanitarian organizations keen to draw attention to the disaster in one of Nepal’s poorest regions.

The image was used by Anil Keshary Shah, a former CEO of Nabil Bank, and Rabindra Mishra, a senior vice president of Rastriya Prajatantra Party. (See archived versions here and here.)

When Nepal Check contacted Meme Nepal to find out the original source of the photo, they replied that they had found the image on social media.

While some users appreciated the creative capabilities of AI in producing these images, others expressed concerns about copyright issues and the work that goes into creating real images.

A month on, AI-generated images supposedly showing the aftermath of the earthquake continue to circulate.

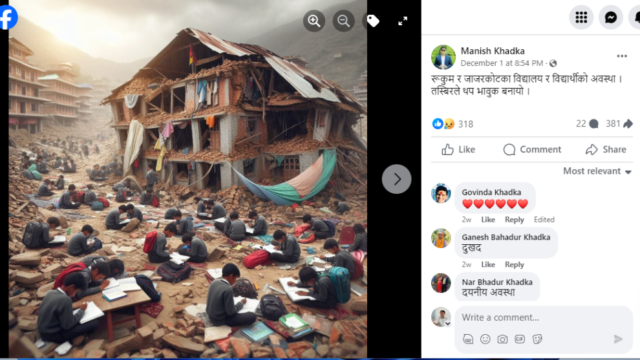

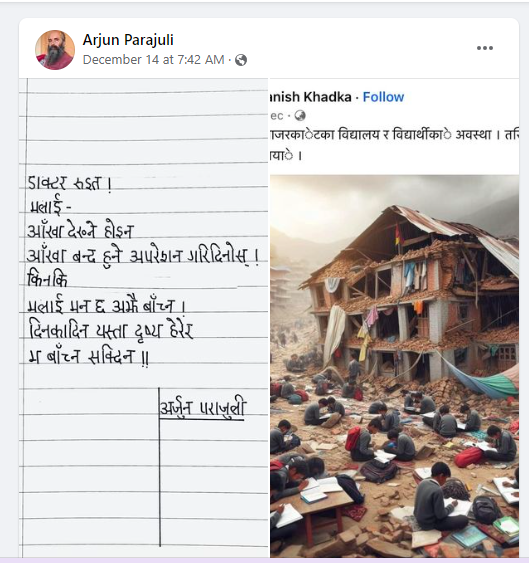

On December 14, 2023, Arjun Parajuli, a Nepali poet and founder of Pathshala Nepal, posted a photo claiming to show students studying amid the earthquake ruins in Jajarkot. Parajuli, who accompanied the photo with a poem, had reshared the image from Manish Khadka, who identifies himself as a journalist based in Musikot of Rukum district.

Both viral images were poignant, but neither captured a real moment. They were generated using text-to-image platforms such as Midjourney and DALL-E.

In the digital age, it’s easy to manipulate images. With the rise of AI-enabled platforms, it has become possible to quickly generate images on the internet.

AI-generated images have swiftly evolved from amusingly odd to convincingly realistic.

This has posed further challenges to fact-checkers who are already inundated with misleading or false information circulating on social media platforms.

Fact-checkers often rely on reverse image search, a tried and tested way to check an image’s authenticity. But Google and other search engines only show photos that have been previously published online, so a newly generated AI image often has no earlier source to trace.

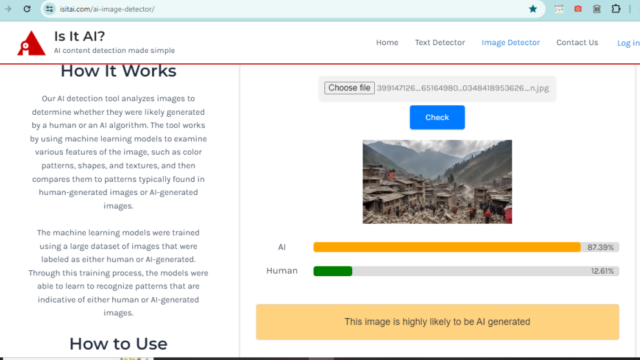

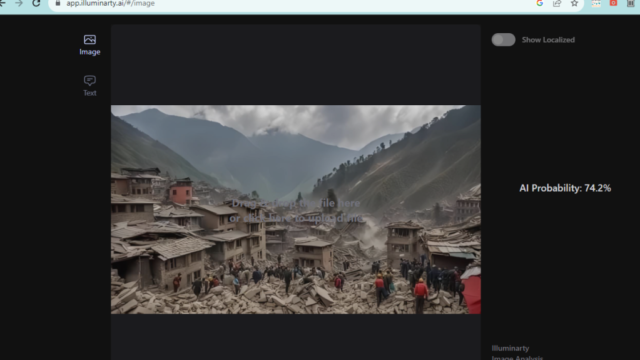

So, how can one ascertain if an image is AI-generated? Currently, there is no tool that can determine this with 100% accuracy.

For example, Nepal Check used Illuminarty.ai and isitai.com to check whether the earthquake images were generated using AI tools. Once an image is uploaded, these platforms return a percentage indicating how likely it is to have been generated by AI.

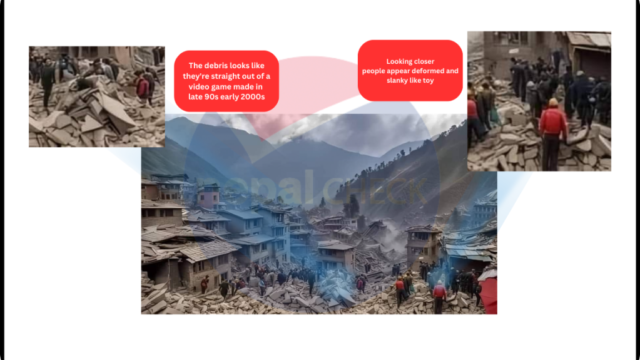

We then contacted Kalim Ahmed, a former fact-checker at AltNews. He made the following observations about the image claiming to show the devastation from the earthquake in Jajarkot.

- If you zoom in and take a closer look at the people, they appear deformed and like toys.

- The rocks/debris just at the center look like they’re straight out of a video game made in the late 90s or early 2000s.

Indeed, many experts say that in the absence of a foolproof way to determine whether a photo is AI-generated, using observational skills to find visual clues is the best way to spot such images.

Experts say that maintaining a healthy dose of skepticism about what you see online (seeing is no longer believing), searching for the source of the content, checking whether any evidence supports the claim and looking for context are powerful ways to separate fact from fiction online.

In a webinar organized by the News Literacy Project in August this year, Dan Evon urged users to keep asking questions (is it authentic?). Surfaces in AI-generated images often seem unusually smooth, which can be a giveaway, according to him. “Everything looks a little off,” he said.

He suggested looking for visual clues, adding that it was crucial to find out the provenance of the image.

Experts caution that the virality of content on social media often stems from its ability to generate outrage or controversy, highlighting the need for careful consideration when encountering emotionally charged material.

In her comprehensive guide on detecting AI-generated images, Tamoa Calzadilla, a fellow at the Reynolds Journalism Institute in the US, encourages users to pay attention to hashtags that may indicate the use of AI in generating the content.

While AI has made significant progress in generating realistic images, it still struggles to accurately replicate human body parts, such as eyes and hands.

“That’s why it’s important to examine them closely: Do they have 5 fingers? Are all the contours clear? If they’re holding an object, are they doing so in a normal way?” she writes in the guide.

Experts recommend that news media disclose to readers and viewers when images are AI-generated. Social media users are likewise advised to state publicly how such images were made, to limit the spread of misinformation.

Although the images purporting to depict the earthquake in Jajarkot lack a close-up view of the subjects, closer examination shows that the people in them resemble drawings rather than real humans.

Nepal Check also compared the viral AI-generated images with those disseminated by news media. We found no such images published by news media in the aftermath of the earthquake.

(Source: https://nepalcheck.org/2023/12/19/)
